Magic Beans:

It’s like we’re Jack in Jack and the Beanstalk chasing magic beans. We buy technology thinking it’s going to solve our problems. We get some beans, like them for a while, then go on to chase more beans. The problem is us: what are we trying to do? –g. joseph

This information is drawn from a large global financial corporation that runs a large number of network tooling technologies.

This section presents aspects of the company's collection of network engineering tools in an effort to develop a tool architecture and model.

Contents

- Current Tools in Relation to Protocol Layer

- Future Tools in Relation to Protocol Layer

- Future Tools in Relation to Logical Model

- Requiem for a Tool Architecture

- The Network Engineering Tools Model (company undisclosed)

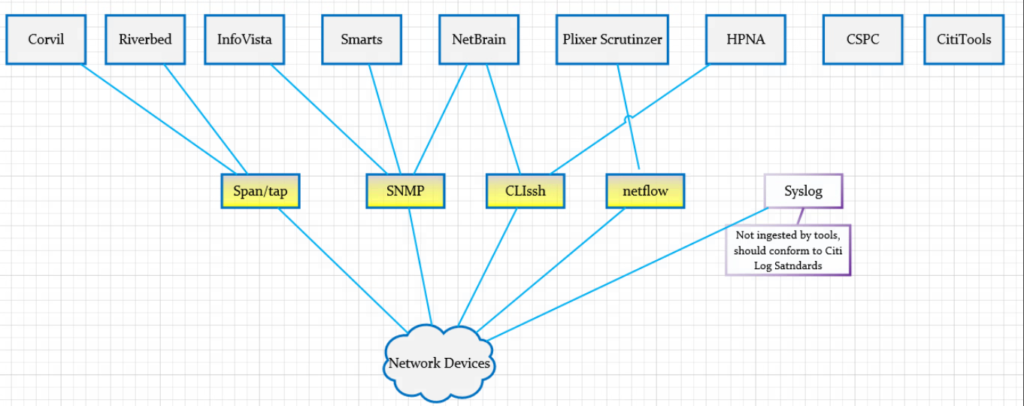

Current Tools in Relation to Protocol Layer

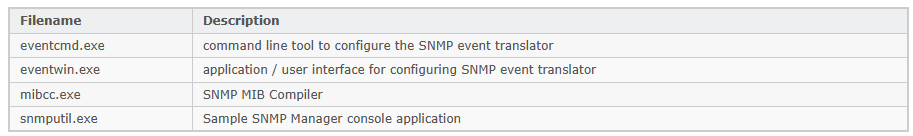

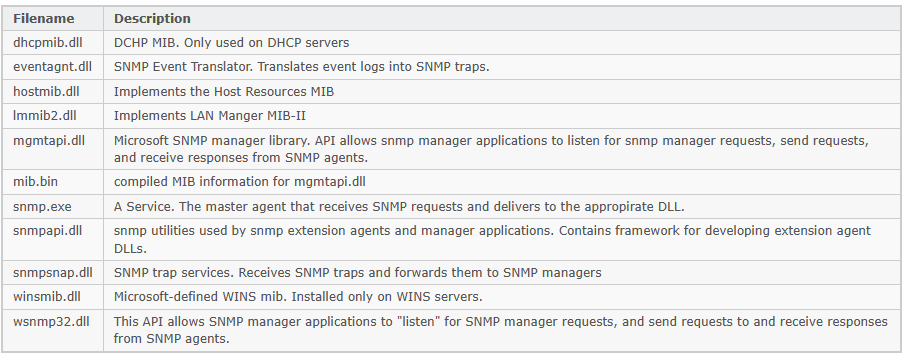

The following diagram shows an overview of the current tools along with their protocols (e.g. SNMP; we stretch the definition beyond typical OSI layering). This relationship supports the simple perspective of “what goes with this” and “what goes with that”. Simple but important questions can be raised, such as: “Why are multiple tools using SNMP?”

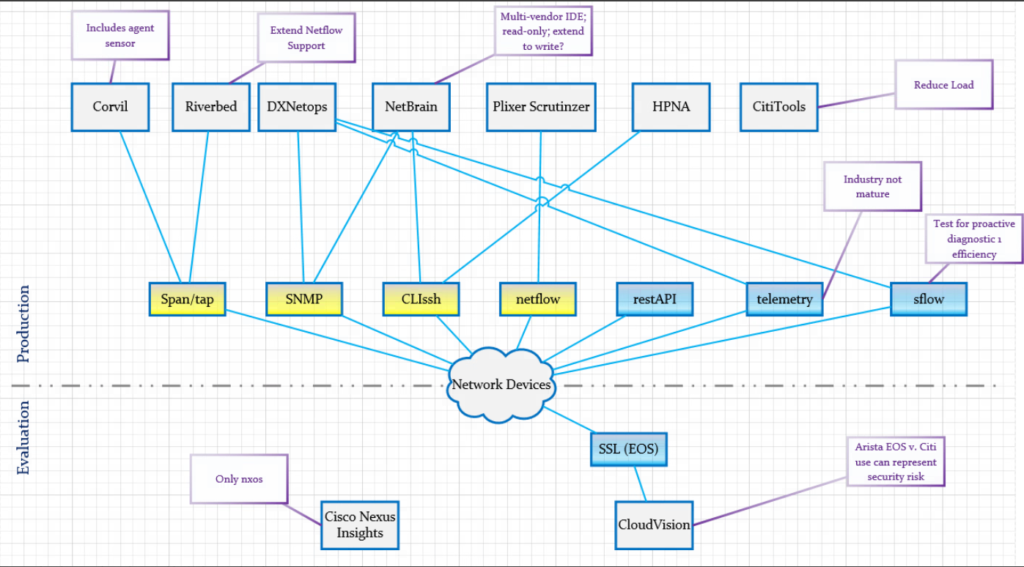
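The “what goes with what” question can also be asked of a plain inventory. The following is a minimal sketch, assuming the tool-to-protocol relationships are available as a simple mapping. The tool names (`tool_a` and so on) and their protocol assignments are hypothetical placeholders, not the tools shown in the diagram.

```python
from collections import defaultdict

# Hypothetical inventory: tool names and protocol assignments are
# placeholders for illustration, not the tools from the diagram.
TOOL_PROTOCOLS = {
    "tool_a": {"SNMP", "ICMP"},
    "tool_b": {"SNMP", "NetFlow"},
    "tool_c": {"Syslog"},
    "tool_d": {"SNMP", "Syslog"},
}


def tools_by_protocol(tool_protocols):
    """Invert a tool -> protocols mapping into protocol -> sorted tool list."""
    inverted = defaultdict(list)
    for tool, protocols in tool_protocols.items():
        for protocol in protocols:
            inverted[protocol].append(tool)
    return {protocol: sorted(tools) for protocol, tools in inverted.items()}


def overlapping_protocols(tool_protocols):
    """Return only the protocols that more than one tool relies on."""
    return {
        protocol: tools
        for protocol, tools in tools_by_protocol(tool_protocols).items()
        if len(tools) > 1
    }


if __name__ == "__main__":
    overlaps = overlapping_protocols(TOOL_PROTOCOLS)
    for protocol, tools in sorted(overlaps.items()):
        print(f"{protocol}: {', '.join(tools)}")
```

Inverting the mapping puts the protocol first, which is the same perspective the diagram takes: any protocol with more than one tool attached is a candidate for the “why are multiple tools using this?” conversation.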

Future Tools in Relation to Protocol Layer

The following diagram extends the previous diagram to include future tools the company was considering. The blue components of the protocol layer show new protocol-tool relationships.

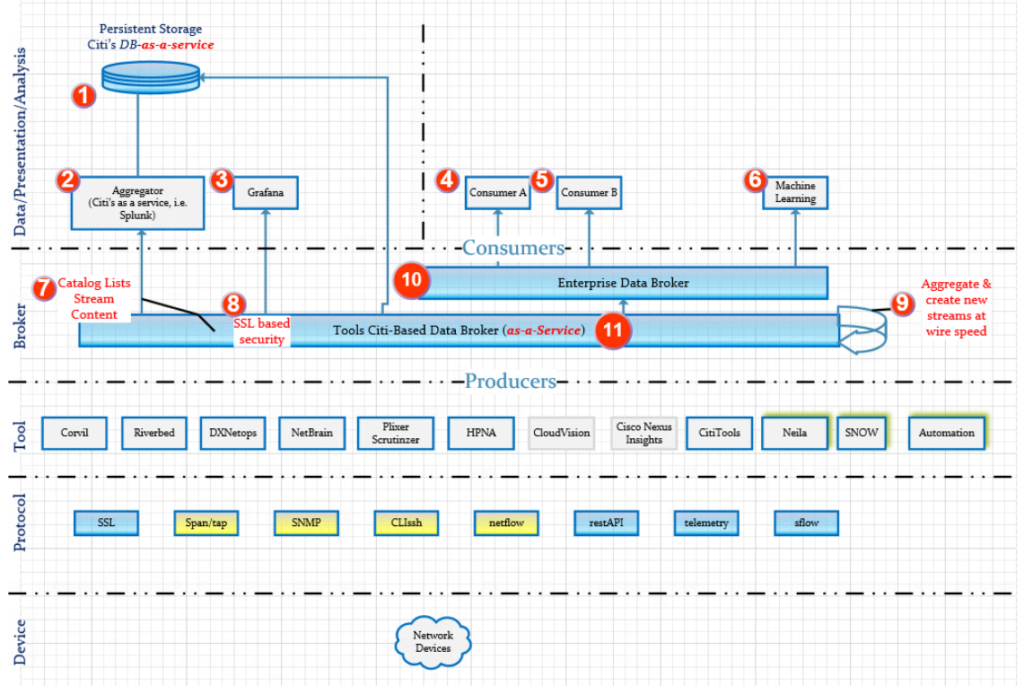

Requiem for a Tool Architecture

The following diagram represents a Tool Architecture with a big-data feel. The intention is to produce responsive data at scale and to off-load the burden of producing information from the tools. The numbers appearing in red are indices into the table that accompanies the diagram.

| Index | Explanation |
| --- | --- |
| 1 | Persistent storage for tools, if required. MongoDB, preferably offered as SaaS (software-as-a-service), is a likely candidate. |
| 2 | Aggregator for converting ephemeral data to meet long-term data requirements. Splunk is the likely first choice, with Elasticsearch second (because there is no official Kafka support for an Elasticsearch connector). |
| 3 | Grafana, due to user demand. It is not a first choice at enterprise scale because it lacks official support for a Kafka connector. It does ingest data from Elasticsearch. |
| 4 | Consumer A is a general consumer attached to the Enterprise-Data-Broker. |
| 5 | Consumer B is a general consumer attached to the Enterprise-Data-Broker. |
| 6 | Machine learning should naturally be a consumer of the Enterprise-Data-Broker in order to correlate different data streams as desired. A correlation coefficient could also be computed to measure how closely the streams are related to each other. |
| 7 | A catalog mechanism that provides a library of the different data streams. |
| 8 | SSL-based security. Consumers and producers would need the correct CN (certificate common name) for access. |
| 9 | Desired: an aggregator that lives within the data broker (i.e. Kafka Streams) to combine streams efficiently and at optimum speed, then produce the result. This removes the latency burden on consumers that would otherwise ingest multiple streams. |
| 10 | Enterprise Data Broker (i.e. Kafka). Not controlled by Tools Staff. Consumes data from the Tools Data Broker. |
| 11 | Tools Data Broker. |

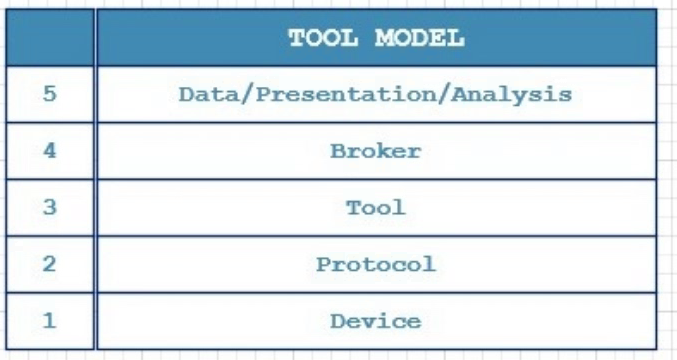
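Index 6 mentions computing a correlation coefficient between data streams. The following is a minimal sketch of that idea using the Pearson correlation coefficient, one common choice; the source does not say which coefficient was intended. It assumes the two streams have already been consumed from the broker and aligned to the same timestamps. The sample values and stream names are hypothetical.

```python
import math


def pearson_correlation(xs, ys):
    """Pearson correlation coefficient of two equally long numeric series."""
    if len(xs) != len(ys):
        raise ValueError("streams must have the same number of samples")
    n = len(xs)
    if n < 2:
        raise ValueError("at least two samples are required")

    mean_x = sum(xs) / n
    mean_y = sum(ys) / n

    # Sums of products of deviations from the mean.
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance_x = sum((x - mean_x) ** 2 for x in xs)
    variance_y = sum((y - mean_y) ** 2 for y in ys)

    if variance_x == 0 or variance_y == 0:
        raise ValueError("correlation is undefined for a constant stream")

    return covariance / math.sqrt(variance_x * variance_y)


if __name__ == "__main__":
    # Hypothetical, already time-aligned samples from two streams.
    link_utilization = [10.0, 20.0, 30.0, 40.0, 50.0]
    packet_drops = [2.0, 4.0, 6.0, 8.0, 10.0]
    print(round(pearson_correlation(link_utilization, packet_drops), 3))
```

A value near 1 or -1 suggests the two streams move together (or in opposition), while a value near 0 suggests little linear relationship. In the architecture above, a calculation like this would run in a consumer of the Enterprise-Data-Broker and not inside the tools themselves.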

The Network Engineering Tools Model

The following diagram is a natural conclusion from the previous sections. It is similar in concept to the 4-layer TCP/IP or 7-layer OSI models. It should serve as the starting point for “what we are trying to do” at a high level, as opposed to starting “somewhere in the middle” with a magic bean (a new tool).